Latency as Alpha

How HFTs Could Spark a Market Scandal

I want to start by wishing you all a Merry Christmas and a happy New Year! This will be the last post of the year, and I want to thank everyone who has continued reading my work. I hope I’ve lived up to your expectations. The coming year will bring many exciting developments to this blog, including the launch of a financial podcast, videos and interviews with various specialists, private chats, and much more, all of which I’ll share in detail at the right time. Until then, take care of yourselves!

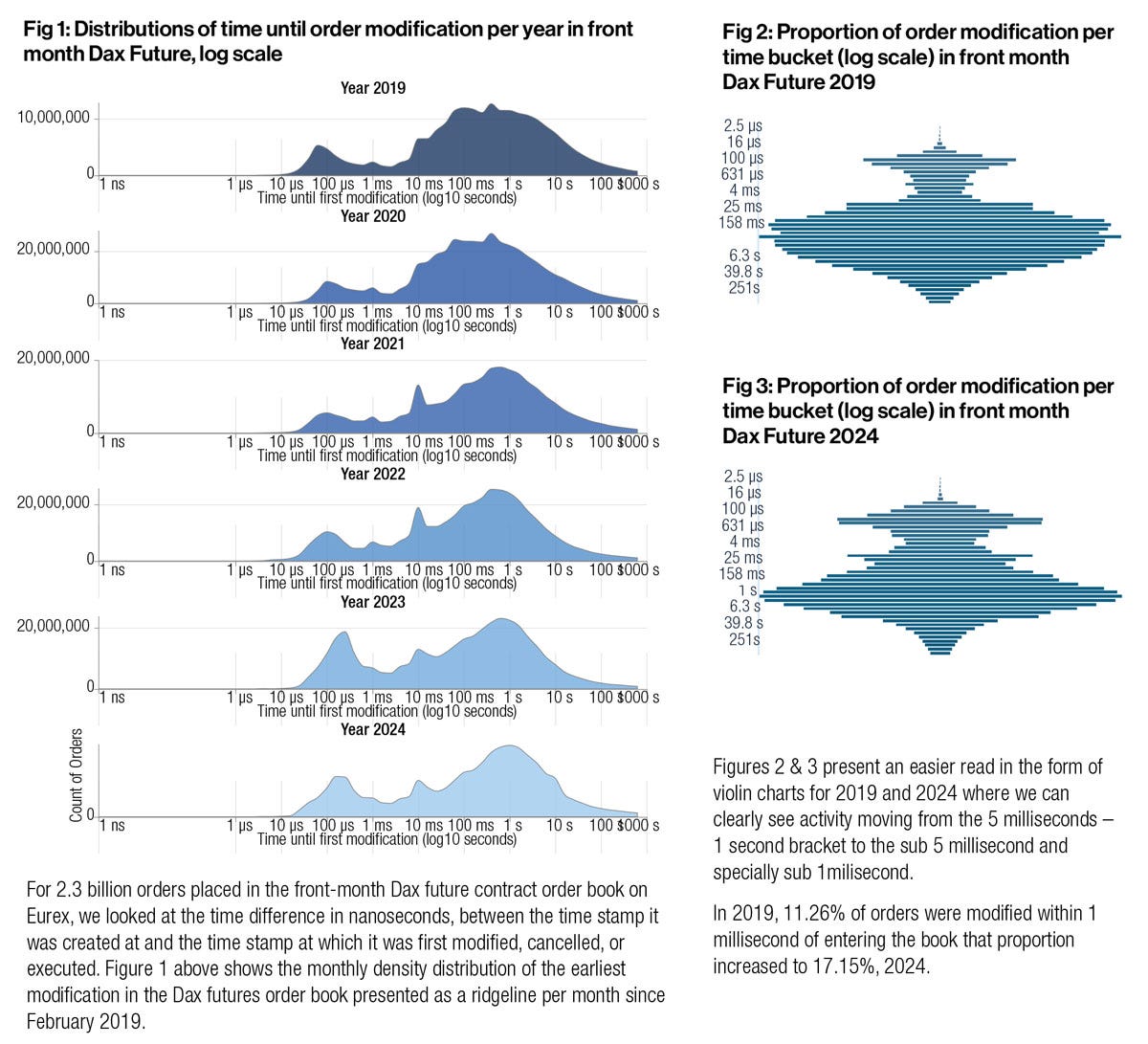

A millisecond used to be a meaningful unit of time in electronic markets. For today’s fastest traders, it is effectively an eternity. In modern high-frequency trading (HFT), profitability is increasingly determined at the level of microseconds and even nanoseconds, where marginal differences in latency decide which algorithm trades first and which is left interacting with a stale price. A dispute that resurfaced this month involving Eurex, one of the world’s largest derivatives exchanges, shows how far this race has progressed and why it has begun to attract regulatory scrutiny. The accompanying figure shows how even a single millisecond can be decisive when placing an order in the order book or withdrawing one from it. This is precisely why it seems paradoxical to me that so little attention has been paid to the issue recently.

Source: GlobalTrading

What is the controversy about? It centers on allegations by the French trading firm Mosaic Finance that certain HFT firms are exploiting a technical feature of Eurex’s market infrastructure to gain fractional speed advantages. The discussion dates back to March, when the first allegations against Eurex were raised. Mosaic has formally urged the European Commission to investigate Eurex, arguing that rival firms use what it describes as “corrupted speculative triggering” (CST) to keep their connections in a perpetually primed state. According to Mosaic, this involves flooding the exchange with incomplete or deliberately malformed data packets that serve no trading purpose but keep network pathways active, allowing those firms to respond fractionally faster when genuine market-moving information arrives. Mosaic claims that this practice, which it argues violates the spirit if not the letter of exchange rules, has generated as much as €600 million in excess profits over the past three years. Eurex has rejected these accusations as unfounded, yet this month it also announced a systems upgrade that market participants widely expect will make such tactics ineffective (I return to this point below).

To understand why a few nanoseconds matter, it is necessary to understand how modern electronic markets process information. Orders are transmitted as packets across networks, passing through network interface cards and switches before they reach the exchange’s matching engine. Even when two traders receive the same public information at effectively the same moment, the one whose order reaches the matching engine first captures the trade. High-frequency strategies such as latency arbitrage or queue positioning rely on reacting to price changes in correlated instruments before competitors can adjust their quotes. Academic work has repeatedly shown that speed advantages translate directly into economic rents in continuous limit order markets, particularly when information arrives discretely but is processed asynchronously (as discussed, for example, in this paper).
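To make the “first to arrive wins” logic concrete, here is a minimal toy sketch in Python. It is not a model of Eurex’s matching engine: the `Message` type, the `resolve_race` function, the participant names, and the nanosecond timestamps are all hypothetical and chosen only for illustration. The single assumption is that the engine processes messages strictly in arrival order.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Message:
    sender: str
    kind: str        # "buy" (take the quote) or "cancel" (withdraw the quote)
    arrival_ns: int  # hypothetical arrival time at the matching engine, in ns


def resolve_race(messages):
    """Process messages in arrival order; the earliest one decides the outcome."""
    first = min(messages, key=lambda m: m.arrival_ns)
    if first.kind == "cancel":
        return f"{first.sender} withdrew the stale quote in time"
    return f"{first.sender} traded against the stale quote"


# A quote has just become stale. The market maker tries to cancel it,
# while two takers try to trade against it. All timestamps are made up.
race = [
    Message("market_maker", "cancel", arrival_ns=1_000_300),
    Message("fast_taker", "buy", arrival_ns=1_000_250),
    Message("slow_taker", "buy", arrival_ns=1_000_900),
]

print(resolve_race(race))  # fast_taker traded against the stale quote
```

In this example a gap of 50 nanoseconds separates the fast taker from the market maker’s cancel, and that gap alone determines who bears the loss on the stale quote. Nobody in the race had a better forecast.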

What Mosaic describes as corrupted speculative triggering is best understood as an attempt to optimize the physical and protocol-level behavior of networks. By sending partial packets or malformed messages that initiate the early stages of communication without completing a valid order, firms may reduce the latency associated with buffering and packet assembly. These techniques operate at the boundary between legitimate optimization and exploitative behavior. They do not rely on superior forecasts or risk-taking, but on detailed knowledge of how exchange systems handle traffic under extreme load. Similar concerns have surfaced before in discussions of message stuffing and quote flooding (as discussed by the US Securities and Exchange Commission), practices regulators have sought to curb because they degrade market quality while benefiting a narrow subset of participants.

What gives the Eurex dispute particular significance is that it appears to have triggered a concrete technical response at the exchange level. According to industry sources cited by GlobalTrading, Eurex plans to shift the monitoring framework across its co-location network from limits based on Ethernet frames to limits enforced at the packet level, starting in April 2026. While Eurex has framed this change as a clarification rather than a prohibition, market participants interpret it as effectively closing the loophole that allegedly enabled CST-style techniques. Under packet-level monitoring, all traffic detected at the physical layer, including incomplete or malformed packets, counts toward message thresholds, making it far harder to pre-stream traffic without triggering exchange controls. In practice, this represents a shift toward regulating the micro-behavior of network traffic itself, not just order flow.
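The difference between the two counting rules can be illustrated with a deliberately simplified sketch. Everything here is an assumption made for illustration: the `Transmission` type, the `counted` function, the traffic mix, and the `LIMIT` value are hypothetical, and Eurex’s actual thresholds and detection logic are not described in the sources I have seen. The sketch only shows why counting incomplete traffic changes the picture.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Transmission:
    complete: bool  # True if it forms a complete, well-formed message


def counted(traffic, count_incomplete):
    """Number of transmissions that count toward the messaging limit."""
    return sum(1 for t in traffic if t.complete or count_incomplete)


LIMIT = 100  # hypothetical threshold per monitoring window

# Hypothetical window: 500 incomplete fragments used to keep the line
# "primed", plus 20 genuine, complete messages.
traffic = [Transmission(complete=False)] * 500 + [Transmission(complete=True)] * 20

old_rule = counted(traffic, count_incomplete=False)  # only complete messages count
new_rule = counted(traffic, count_incomplete=True)   # everything detected counts

print(old_rule, old_rule > LIMIT)  # 20 False
print(new_rule, new_rule > LIMIT)  # 520 True
```

Under the first rule the priming traffic is invisible to the limit. Under the second rule the same behavior breaches it immediately, which is the intuition behind the market’s reading of the change.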

This shift highlights how exchanges are increasingly drawn into policing behavior at layers of the technology stack that were once considered outside the scope of market regulation. Monitoring at the packet or physical layer collapses the distinction between trading rules and network engineering, suggesting that fairness concerns now reach into the plumbing of electronic markets. It also confirms the ambiguity that surrounds many latency-optimization techniques. Eurex has referred to similar practices as “advanced speculative triggering”, while Mosaic labels them “corrupted”, reflecting how the same behavior can be framed either as aggressive optimization or as rule circumvention, depending on perspective.

The broader context is the economic structure of modern exchanges. Trading venues have strong incentives to attract HFT firms, which generate volume and pay substantial fees for co-location and proprietary data feeds. Eurex, like other major venues, offers co-location services that place trading servers physically close to the matching engine, minimizing signal travel time. While such services are formally offered on equal terms, they naturally advantage firms with the capital and technical expertise to exploit every incremental improvement. This creates a persistent tension between the exchange’s role as a neutral market operator and its role as a commercial entity monetizing speed, a tension explicitly acknowledged in European regulatory discussions under MiFID II (European Securities and Markets Authority, MiFID II Market Structures).

From a regulatory perspective, the challenge is that many of these behaviors occupy a gray area. MiFID II requires venues to ensure fair and orderly trading, but it does not specify which low-level networking techniques are permissible. As a result, enforcement often lags innovation. This pattern is familiar. Earlier phases of the high-frequency trading arms race involved microwave transmission networks that shaved milliseconds off inter-exchange communication and the exploitation of subtle order-type asymmetries. In each case, intervention was reactive rather than preemptive, coming only after the practices had become widespread and controversial.

The economic consequences of nanosecond-level advantages extend beyond the profits of individual firms. When outcomes hinge on technical micro-advantages, competition shifts away from price discovery and risk transfer toward infrastructure dominance. This can reduce displayed liquidity and even discourage participation by investors unable to justify the costs of competing at the technological frontier. Market design research has consistently shown that this leads to socially inefficient investment in speed, because the private returns to being faster exceed the social benefits.

Various remedies have been proposed over the years. Some market designers argue for frequent batch auctions, which aggregate orders over very short intervals and eliminate the value of infinitesimal speed advantages. Others advocate congestion pricing, explicit penalties for excessive or malformed messaging, or stricter technical standards enforced directly by exchanges. Eurex’s forthcoming packet-level monitoring may be read as a pragmatic version of the last approach. Similar debates have unfolded in US equity markets, particularly following the Flash Crash and subsequent investigations into market microstructure vulnerabilities (see the CFTC–SEC report, Findings Regarding the Market Events of May 6, 2010, which you can read here).
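To see why batching removes the value of tiny speed advantages, here is a minimal sketch of uniform-price clearing for a single batch. It is a simplified illustration, not any venue’s actual auction algorithm: the `clear_batch` function, the example orders, and the tie-breaking rule (the lowest price among those that maximize volume) are my own assumptions. Prices are expressed in integer ticks to avoid floating-point comparisons.

```python
def clear_batch(bids, asks):
    """Uniform-price clearing for one batch.

    bids, asks: lists of (price_in_ticks, quantity).
    Arrival time within the batch is deliberately not an input.
    Returns (clearing_price, matched_volume), or None if nothing crosses.
    """
    candidates = sorted({p for p, _ in bids} | {p for p, _ in asks})
    best = None
    for price in candidates:
        demand = sum(q for p, q in bids if p >= price)  # buyers willing at this price
        supply = sum(q for p, q in asks if p <= price)  # sellers willing at this price
        volume = min(demand, supply)
        # Keep the first (lowest) price that achieves the largest volume.
        if volume > 0 and (best is None or volume > best[1]):
            best = (price, volume)
    return best


# Hypothetical orders collected during one batch interval.
bids = [(10005, 10), (10003, 5), (10000, 8)]
asks = [(10000, 6), (10002, 7), (10006, 4)]

print(clear_batch(bids, asks))                                  # (10002, 13)
print(clear_batch(list(reversed(bids)), list(reversed(asks))))  # (10002, 13)
```

Reordering the orders inside the batch leaves the clearing price and volume unchanged. Arriving a few nanoseconds earlier therefore buys nothing, as long as the order makes it into the same batch.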

What makes the current episode particularly noteworthy is its timing. The allegations surfaced in late 2025, just as regulators are once again reassessing market structure and fairness amid rising automation and concentration. The fact that Eurex announced a relevant system change shortly after the complaint became public shows how sensitive exchanges have become to reputational and regulatory risk, even as they deny wrongdoing. More broadly, the dispute confirms a structural feature of modern finance. As long as markets operate in continuous time with deterministic priority rules, participants will seek to exploit the smallest measurable advantage. The unit of competition has shrunk from seconds to milliseconds to nanoseconds, but the underlying logic has remained unchanged: technology does not eliminate the race, it merely compresses it. The open question for regulators and market operators is not whether such behavior can be eliminated entirely, but whether its private rewards continue to outweigh its social costs.
